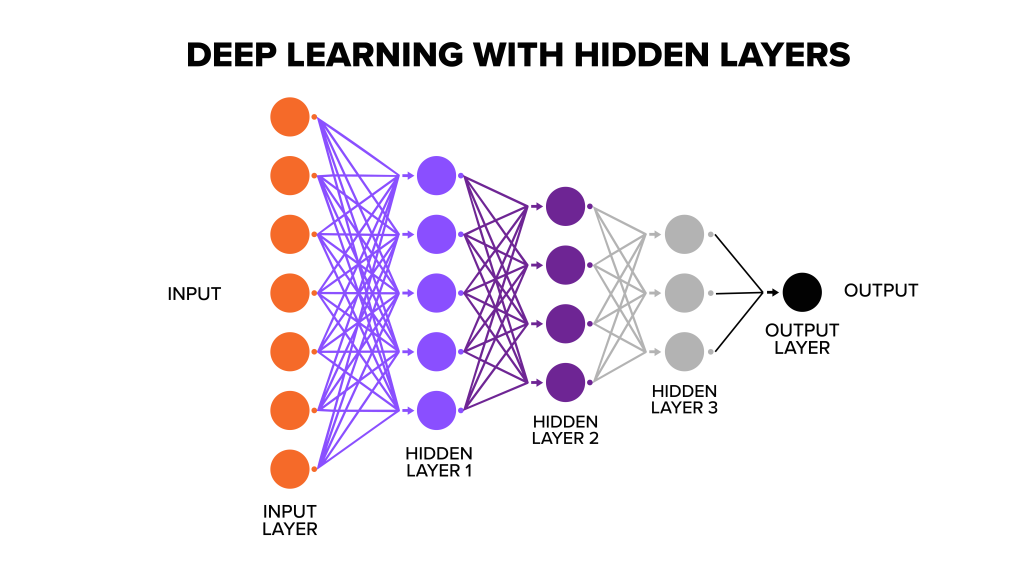

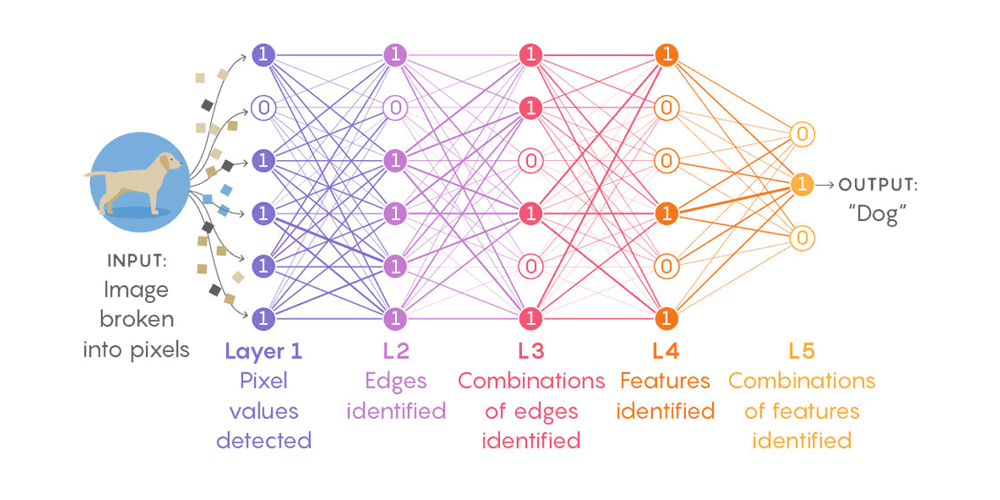

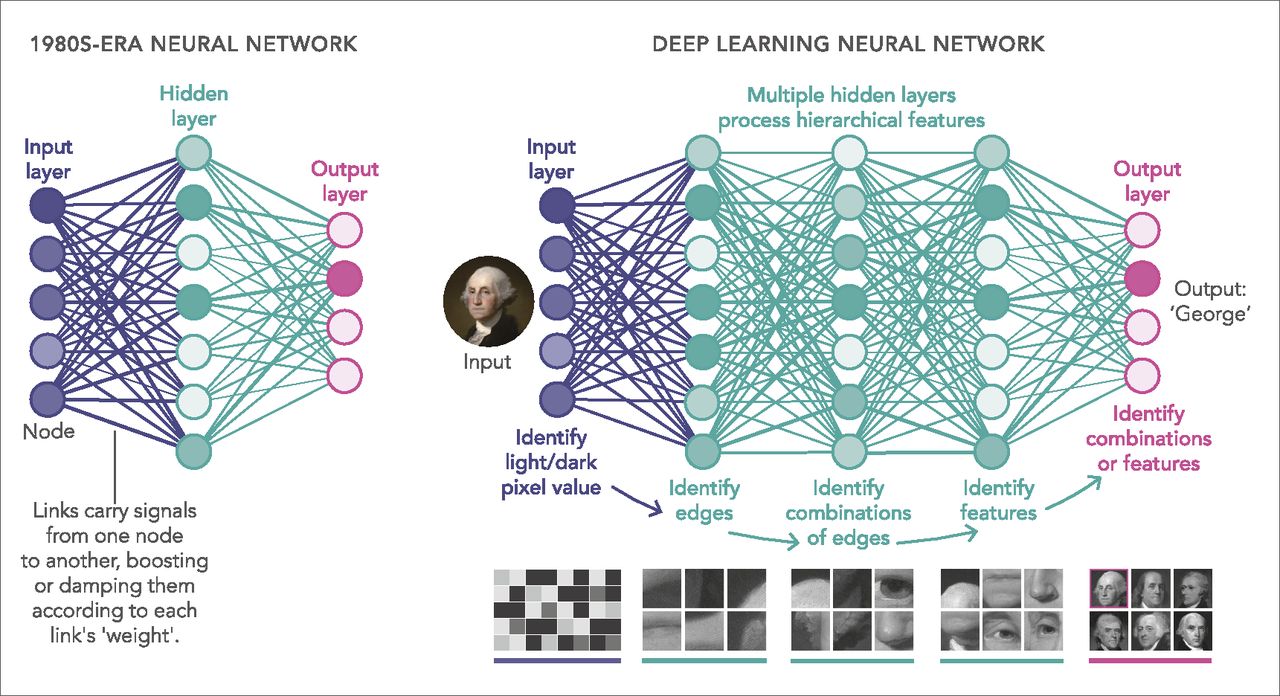

Let’s see why neural networks are considered to be universal function approximators. Each neuron learns a limited function: f(.) = g(W*X), where W is the weight vector to be learned, X is the input vector and g(.) is a non-linear transformation. W*X can be visualized as a line being learned in high-dimensional space (a hyperplane), and g(.) can be any non-linear function that is differentiable, at least almost everywhere, such as sigmoid, tanh or ReLU (all commonly used in the deep learning community).

Learning in neural networks is nothing but finding the optimum weight vector W. As an example, in y = mx + c, we have two weights: m and c. Depending on the distribution of points in 2D space, we find the values of m and c that satisfy some criterion, for example that the difference between the predicted y and the actual y is minimal over all data points.

Now that each neuron is a non-linear function, we stack several such neurons in a “layer”, where each neuron receives the same set of inputs but learns different weights W. Therefore, each layer has a set of learned functions, f1, f2, …, fn, whose outputs are called the hidden layer values. These values are combined again in the next layer, h(f1, f2, …, fn), and so on. This way, each layer is composed of functions from the previous layer (something like h(f(g(x)))). It has been shown that through this composition, we can approximate any complex non-linear function.

Deep learning is a neural network with many hidden layers (usually more than two). Effectively, deep learning is a complex composition of functions from layer to layer, and training finds the function that defines a mapping from input to output. For example, if the input is an image of a lion and the output is the classification that the image belongs to the class of lions, then deep learning is learning a function that maps image vectors to classes. Similarly, if the input is a word sequence and the output is whether the sentence has a positive, neutral or negative sentiment, then deep learning is learning a map from input text to output classes: neutral, positive or negative.

Deep learning as interpolation

From a biological interpretation, humans process images of the world by hierarchically interpreting them bit by bit, from low-level features like edges and contours to high-level features like objects and scenes.
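To make the y = mx + c example concrete, here is a minimal sketch of "finding the optimum weights". It assumes the criterion is the sum of squared differences between predicted and actual y, and it uses the closed-form least-squares solution rather than the iterative training a neural network would normally use. The function name `fit_line` and the data points are hypothetical, chosen only for illustration.

```python
def fit_line(xs, ys):
    """Return (m, c) minimizing the sum of squared errors for y = m*x + c."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Closed-form ordinary least squares for a single input variable.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    m = sxy / sxx
    c = mean_y - m * mean_x
    return m, c


# Hypothetical data points that lie exactly on y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

m, c = fit_line(xs, ys)
print(f"m = {m}, c = {c}")
```

With noisy points the same code returns the line that best fits them under the squared-error criterion; a different criterion would generally give different values of m and c.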
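The single-neuron function f(.) = g(W*X) and the idea of stacking neurons into layers can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not a reference implementation: g is taken to be the sigmoid, there is no bias term (matching the formula in the text), and the weight values in `W1` and `W2` are made up rather than learned.

```python
import math


def sigmoid(z):
    """One possible choice for the non-linear transformation g(.)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(weights, inputs, g=sigmoid):
    """f(.) = g(W*X): a weighted sum of the inputs passed through g."""
    return g(sum(w * x for w, x in zip(weights, inputs)))


def layer(weight_rows, inputs, g=sigmoid):
    """Each neuron receives the same inputs but has its own weight vector."""
    return [neuron(weights, inputs, g) for weights in weight_rows]


# Hypothetical, hand-picked weights (a real network would learn these).
W1 = [[0.5, -0.5], [1.0, 1.0], [-1.0, 0.5]]  # 3 hidden neurons, 2 inputs each
W2 = [[1.0, -1.0, 0.5]]                      # 1 output neuron over 3 hidden values

x = [1.0, 2.0]
hidden = layer(W1, x)       # hidden layer values [f1, f2, f3]
output = layer(W2, hidden)  # next layer combines them: h(f1, f2, f3)
print(hidden, output)
```

The final value is a composition of functions of the original input, which is the h(f(g(x))) pattern described in the text; adding more layers simply nests the composition further.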
